Watermarking has been floated by Big Tech as one of the most promising methods to combat the escalating AI misinformation problem online. But so far, the results don’t seem promising, according to experts and an NBC News review of misinformation.

Adobe’s general counsel and trust officer Dana Rao wrote in a February blog post that Adobe’s C2PA watermarking standard, which Meta and other Big Tech companies have signed onto, would be instrumental in educating the public about misleading AI.

“With more than two billion voters expected to participate in elections around the world this year, advancing C2PA’s mission has never been more critical,” Rao wrote.

The technologies are only in their infancy and in a limited state of deployment but, already, watermarking has proven to be easy to bypass.

Many contemporary watermarking technologies meant to identify AI-generated media use two components: an invisible tag contained in an image’s metadata and a visible label superimposed on an image.

But both invisible watermarks, which can take the form of microscopic pixels or metadata, and visible labels can be removed, sometimes through rudimentary methods such as screenshotting and cropping.

So far, major social media and tech companies have not strictly required that AI-generated or AI-edited content be labeled, nor have they enforced such labeling.

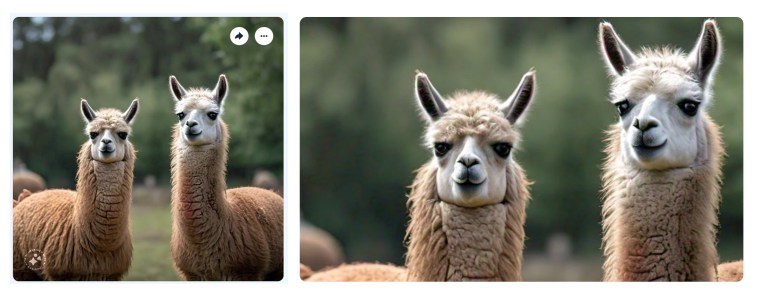

The vulnerabilities of watermarking were on display Wednesday when Meta CEO Mark Zuckerberg updated his cover photo on Facebook with an AI-generated image of llamas standing on computers. It was created with Meta’s AI image generator, Imagine, which launched in December. The generator is supposed to produce images with built-in labels, which show up as a tiny symbol in the bottom left corner of images like Zuckerberg’s llamas.

But on Zuckerberg’s AI-generated llama image, the label wasn’t visible to users logged out of Facebook. It also wasn’t visible unless you clicked on and opened Zuckerberg’s cover photo. When NBC News created AI-generated images of llamas with Imagine, the label could easily be removed by screenshotting part of the image that didn’t have the label in it. According to Meta, the invisible watermark is carried over in screenshots.

In February, Meta announced it would begin identifying AI-generated content through watermarking technology and labeling AI-generated content on Facebook, Instagram and Threads. The watermarks Meta uses are contained in metadata, which is invisible data that can only be viewed with technology built to extract it. In its announcement, Meta acknowledged that watermarking isn’t totally effective and can be removed or manipulated in bad-faith efforts.

The company said it will also require users to disclose whether content they post is AI-generated and “may apply penalties” if they don’t. These standards are coming in the next several months, Meta said.

AI watermarks can even be removed when a user doesn’t intend to remove them. Sometimes uploading photos online strips the metadata from them in the process.
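To illustrate how a metadata tag can vanish without anyone tampering with it, here is a minimal Python sketch. It builds a one-pixel PNG carrying a text tag, then rewrites the file keeping only the chunks needed to draw the picture, roughly as an upload pipeline that re-encodes images might. The `ai_generated` tag and the function names are hypothetical and chosen for illustration; real provenance metadata such as content credentials is more elaborate, and this is not a description of any particular platform's pipeline.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def make_chunk(chunk_type: bytes, data: bytes) -> bytes:
    """Build one PNG chunk: length, type, data, then CRC-32 over type + data."""
    crc = zlib.crc32(chunk_type + data) & 0xFFFFFFFF
    return struct.pack(">I", len(data)) + chunk_type + data + struct.pack(">I", crc)


def build_tagged_png() -> bytes:
    """Return a 1x1 grayscale PNG with a hypothetical 'ai_generated' text tag."""
    # width=1, height=1, bit depth 8, grayscale, default compression/filter/interlace
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    pixels = zlib.compress(b"\x00\x00")  # filter byte + one black pixel
    tag = b"ai_generated\x00true"        # tEXt layout: keyword, NUL, value
    return (
        PNG_SIGNATURE
        + make_chunk(b"IHDR", ihdr)
        + make_chunk(b"tEXt", tag)
        + make_chunk(b"IDAT", pixels)
        + make_chunk(b"IEND", b"")
    )


def read_chunks(png: bytes):
    """Yield (type, data) for each chunk in a PNG byte string."""
    pos = len(PNG_SIGNATURE)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        chunk_type = png[pos + 4:pos + 8]
        data = png[pos + 8:pos + 8 + length]
        yield chunk_type, data
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC


def strip_metadata(png: bytes) -> bytes:
    """Keep only the chunks required to render the image; drop everything else."""
    keep = {b"IHDR", b"PLTE", b"IDAT", b"IEND"}
    body = b"".join(make_chunk(t, d) for t, d in read_chunks(png) if t in keep)
    return PNG_SIGNATURE + body


def text_tags(png: bytes) -> dict:
    """Collect the keyword/value pairs stored in tEXt chunks."""
    tags = {}
    for chunk_type, data in read_chunks(png):
        if chunk_type == b"tEXt":
            key, _, value = data.partition(b"\x00")
            tags[key.decode("latin-1")] = value.decode("latin-1")
    return tags


original = build_tagged_png()
uploaded = strip_metadata(original)
print(text_tags(original))  # {'ai_generated': 'true'}
print(text_tags(uploaded))  # {}
```

The pixel data is identical in both files, so the picture looks the same. Only the tag is gone.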

The visible labels associated with watermarking pose further issues.

“It takes about two seconds to remove that sort of watermark,” said Sophie Toura, who works for a U.K. tech lobbying and advocacy firm called Control AI, which launched in October 2023. “All these claims about being more rigorous and hard to remove tend to fall flat.”

A senior technologist for the Electronic Frontier Foundation, a digital civil liberties nonprofit group, wrote that even the most robust and sophisticated watermarks can be removed by someone who has the skill and desire to manipulate the file itself.

Watermarks can be not only stripped but also replicated, opening up the possibility of false positives that imply unedited, real media is actually AI-generated.

The companies that have committed to cooperative watermarking standards are major players such as Meta, Google, OpenAI, Microsoft, Adobe and Midjourney. But there are thousands of AI models available to download and use on app stores like Google Play and websites like Microsoft’s GitHub that aren’t beholden to watermarking standards.

Under Adobe’s C2PA standard, which has been adopted by Google, Microsoft, Meta, OpenAI, major news outlets including NBCU News Group, and major camera companies, images are meant to automatically carry a watermark paired with a visible label called “content credentials.”